Kanad Chakrabarti

Kanad Chakrabarti's MPhil/PhD Art research project

Reasons for Persons: The Good Successor Question

Most people find it intuitively plausible, and seemingly uncontroversial, that humanity and human values are in some sense objective and worthy of preservation. However, against the current background of accelerating technological progress, some respected AI researchers note that, given sufficiently powerful AI, humans may eventually become a ‘second species’ on Earth (perhaps before going extinct) or else ‘evolve’ into a successor species, which might be purely artificial or some biological-artificial combination.

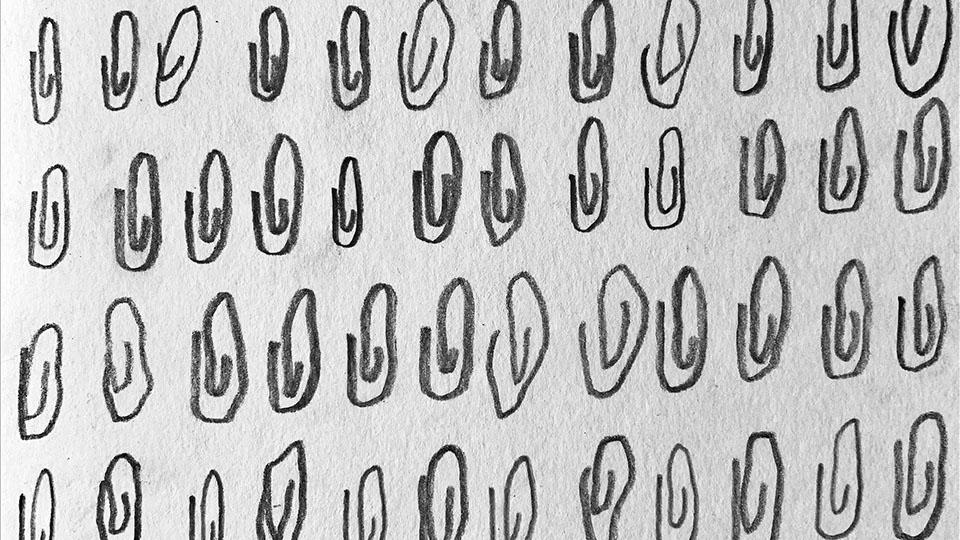

untitled (2024), graphite on paper

Yet there seems to be little writing that justifies the specialness of humanity from an impersonal, species-neutral perspective, particularly in the context of Artificial General Intelligence (AGI) or Artificial Superintelligence (ASI), which some researchers believe may arrive within a decade. This question is important because, whether one fears human extinction at the hands of AI, or welcomes humanity’s potential evolution into some other form, we are not sure in what sense, or for whom, this would be ‘good’ or ‘bad’. Moreover, having clarity on the issues involved might also be useful if and when AIs become morally relevant.

I explore this question in the context of the philosophy of AI and the growing literature on the moral status of non-human animals and ecosystems, including relevant writing around post/transhumanism, extraterrestrial life, and the Simulation Argument. From an empirical perspective, I am interested in understanding potential interactions within AI societies and between AIs and humans, given the unique game-theoretic challenges that seem to be present. A starting intuition is that the value questions above might have a partial answer in humanity’s propensity for purposeless creativity (that is, artistic production, expressed across billions of diverse subjectivities), and I hope to probe this topic within language model-driven multi-agent environments.

Supervisors

- Audrey Samson

- Daniel Rourke

- Edgar Schmitz